| 2004 |

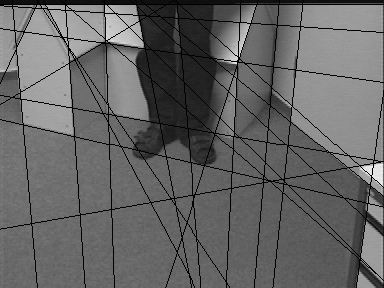

Pose Estimation of Free-form Objects

Rosenhahn B., Sommer G., Klette R.

Technical Report 0401, Christian-Albrechts-Universität zu Kiel, Institut für Informatik und Praktische Mathematik, March 2004

|

PDF, Bibtex |

| 2003 |

Pose Estimation Revisited

Rosenhahn B.

Dissertation, Institut für Informatik und Praktische Mathematik, Christian-Albrechts-Universität zu Kiel, 2003.

|

PDF, Bibtex, Abstract |

| 2002 |

Pose Estimation in Conformal Geometric Algebra, Part I: The Stratification of Mathematical Spaces, Part II: Real-time Pose Estimation using Extended Feature Concepts

Rosenhahn B., Sommer G.

Technical Report 0206, Christian-Albrechts-Universität zu Kiel, Institut für Informatik und Praktische Mathematik, November 2002

|

PDF, Bibtex |

| 2002 |

Adaptive pose estimation for different corresponding entities

Rosenhahn B., Sommer G.

In L. Van Gool, editor, Pattern Recognition, 24. Symposium für Mustererkennung, Zürich, September 2002, Vol. 2449 of LNCS, pp. 265-273. Springer-Verlag, Berlin Heidelberg, 2002

|

PDF, Bibtex |

| 2001 |

Learning-Based Robot Vision

Pauli J.

Springer-Verlag, Heidelberg, 2001

|

PDF, Bibtex |

| 2001 |

A unified description of multiple view geometry

Perwass C., Lasenby J.

In G. Sommer, editor, Geometric Computing with Clifford Algebra, pp. 337-369. Springer-Verlag, Heidelberg, 2001

|

PDF, PS, Bibtex |

| 2001 |

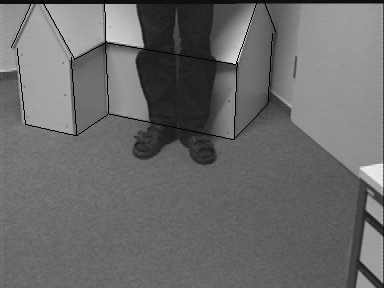

Tracking with a novel pose estimation algorithm

Rosenhahn B., Krüger N., Rabsch T., Sommer G.

In R. Klette, S. Peleg and G. Sommer, editors, International Workshop "Robot Vision 2001", Auckland, New Zealand, Vol. 1998 of LNCS, pp. 9-18. Springer-Verlag, 2001

|

PDF, PS, Bibtex |

| 2001 |

The motor extended Kalman filter for dynamic rigid motion estimation from line observations

Zhang Y., Sommer G., Bayro-Corrochano E.

In G. Sommer, editor, Geometric Computing with Clifford Algebra, pp. 501-530. Springer-Verlag, Heidelberg, 2001

|

PDF, PS, Bibtex |

| 2000 |

Development of Camera-Equipped Robot Systems

Pauli J.

Technical Report 9904, Christian-Albrechts-Universität zu Kiel, Institut für Informatik und Praktische Mathematik, August 2000

|

PDF, PS, Bibtex |

| 2000 |

Compatibilities for boundary extraction

Pauli J., Sommer G.

In G. Sommer, N. Krüger and C. Perwass, editors, 22. Symposium für Mustererkennung, DAGM 2000, Kiel, pp. 468-475. Springer-Verlag, 2000

|

PDF, PS, Bibtex |

| 2000 |

Pose estimation in the language of kinematics

Rosenhahn B., Zhang Y., Sommer G.

In G. Sommer and Y. Zeevi, editors, 2nd International Workshop on Algebraic Frames for the Perception-Action Cycle, AFPAC 2000, Kiel, Vol. 1888 of LNCS, pp. 284-293. Springer-Verlag, 2000

|

PDF, PS, Bibtex |

| 1999 |

The global algebraic frame of the perception-action cycle

Sommer G.

In B. Jähne, H. Haussecker and P. Geissler, editors, Handbook of Computer Vision and Applications, pp. 221-264. Academic Press, San Diego, 1999

|

PDF, Bibtex |

| 1998 |

Geometric/photometric consensus and regular shape quasi-invariants for object localization and boundary extraction

Pauli J.

Technical Report 9805, Christian-Albrechts-Universität zu Kiel, Institut für Informatik und Praktische Mathematik, May 1998

|

PDF, PS, Bibtex |